Moreover, our FSM-based model reduces the power consumption of training on a GPU by 33% compared to an LSTM model of the same size. All the possible ways an automaton can develop are represented by a set of matrices, which is formally characterized. Based on this representation, a method to calculate some probabilities of these automata is given. Therefore, our FSM-based model can learn extremely long-term dependencies, as it requires only 1/l of the memory storage that LSTMs need during training, where l is the number of time steps. The transition probabilities of the stochastic automata we introduce in this paper depend on the number of times the current state has been visited. Unlike long short-term memories (LSTMs), which unroll the network for each input time step and perform backpropagation on the unrolled network, our FSM-based model needs to backpropagate gradients only for the current input time step, yet it is still capable of learning long-term dependencies.
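To make the idea of visit-dependent transition probabilities concrete, here is a minimal sketch. It assumes, purely for illustration, that the "set of matrices" is a list indexed by how many times the current state has been visited, with the last matrix reused once the count exceeds the list length. The function name `path_probability` and the example numbers are hypothetical and do not come from the source.

```python
def path_probability(path, matrices):
    """Probability of following `path` (a list of state indices).

    Assumption for illustration: matrices[k][i][j] is the probability of
    moving from state i to state j when state i is being left for the
    (k + 1)-th time; the last matrix is reused for all later visits.
    """
    visits = {}
    prob = 1.0
    for current, nxt in zip(path, path[1:]):
        visits[current] = visits.get(current, 0) + 1
        k = min(visits[current], len(matrices)) - 1
        prob *= matrices[k][current][nxt]
    return prob


# Two states; state 0 becomes less "sticky" on its second visit.
example_matrices = [
    [[0.5, 0.5], [0.0, 1.0]],  # used on the first visit to a state
    [[0.2, 0.8], [0.0, 1.0]],  # used on the second and later visits
]
# The first step uses the first matrix (0.5), the second step the second (0.8).
example_prob = path_probability([0, 0, 1], example_matrices)
```

Summing such path probabilities over all paths that end in a given state would give the probability of reaching that state, which is one plausible reading of the probabilities the text says can be calculated.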

# Finite state automata stochastic series

Inspired by the capability of FSMs in processing binary streams, we then propose an FSM-based model that can process time series data when performing temporal tasks such as character-level language modeling. We show that the proposed FSM-based network can synthesize multi-input complex functions such as 2D Gabor filters and can perform non-sequential tasks such as image classification on stochastic streams with no multiplication, since FSMs are implemented by look-up tables only. In this paper, we introduce a method that can train a multi-layer FSM-based network where FSMs are connected to every FSM in the previous and the next layer. The grammar is finally converted into a finite state automaton. The system makes a transition to state s1 with probability P(s1 | s0, a0) and generates observation o1 with probability P(o1 | s1, a0). The equations modeling the continuous behavior of the system at a given instant therefore depend on the discrete state in which the system is found (Wolff, 1998). The first action applied by the agent, as dictated by M, is a0 with probability (q0)(a0). A hybrid automaton (Perez, 2009; Marzat, 2008) appears as the association of a finite state automaton piloting a set of continuous dynamic equations.
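The interaction described above (an action drawn from the machine M, a state transition with probability P(s1 | s0, a0), then an observation with probability P(o1 | s1, a0)) can be sketched as a short simulation. This is only an illustrative sketch: the dictionary layout, the function names `sample` and `rollout`, and the assumption that the machine updates its internal state from the latest observation are all hypothetical and not taken from the source.

```python
import random


def sample(dist, rng):
    """Draw one outcome from a dict mapping outcomes to probabilities."""
    outcomes = list(dist)
    weights = [dist[o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


def rollout(policy_out, policy_next, trans, obs, s0, q0, steps, rng):
    """Simulate the agent/environment loop for `steps` steps.

    policy_out[q]       : distribution over actions in machine state q
    policy_next[(q, o)] : distribution over next machine states (assumed form)
    trans[(s, a)]       : distribution over next environment states
    obs[(s_next, a)]    : distribution over observations
    """
    s, q = s0, q0
    trace = []
    for _ in range(steps):
        a = sample(policy_out[q], rng)        # action chosen by the machine
        s = sample(trans[(s, a)], rng)        # s' ~ P(. | s, a)
        o = sample(obs[(s, a)], rng)          # o ~ P(. | s', a)
        q = sample(policy_next[(q, o)], rng)  # machine reacts to the observation
        trace.append((a, s, o))
    return trace


# Tiny hypothetical model with two environment states and two machine states.
demo_trace = rollout(
    policy_out={"q0": {"a0": 0.9, "a1": 0.1}, "q1": {"a0": 0.2, "a1": 0.8}},
    policy_next={(q, o): {"q0": 0.5, "q1": 0.5}
                 for q in ("q0", "q1") for o in ("o0", "o1")},
    trans={(s, a): {"s0": 0.3, "s1": 0.7}
           for s in ("s0", "s1") for a in ("a0", "a1")},
    obs={(s, a): {"o0": 0.4, "o1": 0.6}
         for s in ("s0", "s1") for a in ("a0", "a1")},
    s0="s0", q0="q0", steps=5, rng=random.Random(0),
)
```

Each entry of `demo_trace` is an (action, next state, observation) triple, so the first entry corresponds to (a0 or a1, s1 or s0, o1 or o0) in the notation of the text.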

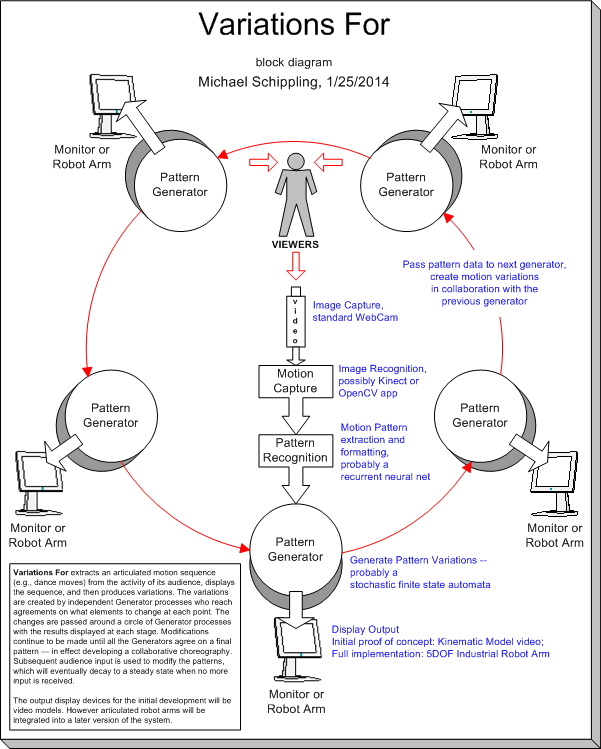

The learning model that we study consists of the stochastic automaton in a feedback. Let M be a stochastic Moore machine denoting the stochastic policy for the agent, and assume that the environment is initially in state s0. Hence, a single-input linear FSM is conventionally used to implement complex single-input functions, such as tanh and exponentiation functions, in the stochastic computing (SC) domain, where continuous values are represented by sequences of random bits. Stochastic finite automata are well adapted for the constrained integration of pairs. Such learning automata select the best action out of a finite. Arash Ardakani, Amir Ardakani, Warren Gross. Abstract: A finite-state machine (FSM) is a computation model to process binary strings in sequential circuits. automata are coupled, it is clear that the condition in which the state of the plant is within A and that of the stochastic regulator is a 1 is a stable one.
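The use of a single-input linear FSM to implement tanh on stochastic bit streams can be sketched as follows. This follows a construction commonly described in the stochastic computing literature rather than anything specified in the text above: a value x in [-1, 1] is encoded in bipolar form, with each bit equal to 1 with probability (x + 1) / 2, and an N-state saturating up/down counter outputs 1 whenever it sits in the upper half of its states. The decoded output then approximates tanh(N/2 · x). The function names and the choice N = 8 are assumptions for illustration.

```python
import math
import random


def to_bipolar_stream(x, length, rng):
    """Encode x in [-1, 1] as random bits with P(bit = 1) = (x + 1) / 2."""
    p = (x + 1) / 2
    return [1 if rng.random() < p else 0 for _ in range(length)]


def linear_fsm_tanh(bits, n_states=8):
    """Run a saturating up/down counter over the input bits.

    The state moves up on a 1 and down on a 0, clamped to [0, n_states - 1].
    The output bit is 1 while the state is in the upper half.
    """
    state = n_states // 2
    out = []
    for b in bits:
        if b:
            state = min(state + 1, n_states - 1)
        else:
            state = max(state - 1, 0)
        out.append(1 if state >= n_states // 2 else 0)
    return out


def from_bipolar_stream(bits):
    """Decode a bipolar stream back to a value in [-1, 1]."""
    return 2 * sum(bits) / len(bits) - 1


rng = random.Random(42)
x = 0.3
stream = to_bipolar_stream(x, 100_000, rng)
estimate = from_bipolar_stream(linear_fsm_tanh(stream, n_states=8))
reference = math.tanh(8 / 2 * x)  # the value the FSM output should approximate
```

The whole computation uses only a state update and a table-like output rule, with no multiplication, which is the property the text attributes to FSM-based processing. The approximation is statistical, so `estimate` fluctuates around `reference` and tightens as the stream gets longer.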